The best AI client for Mac

Instantly switch between 300+ AI models from a single native app.

feature-packed · native performance · private by default


Everything you need to run AI on your Mac

Best-in-class native macOS app: built using SwiftUI and AppKit.

Familiar UI, universal shortcut keys, and super-fast performance on Apple M1/M2/M3/M4 chips.

- Your entire AI stack in one app.

- Use OpenAI, Anthropic, Google, Mistral, Azure, Bedrock, and local models in one unified workspace. Switch instantly without touching another app or browser tab.

- Serious workflow tools for power users.

- Work with projects, multi-chat threads, forking, and reusable agents to keep everything structured. BoltAI scales from quick prompts to complex long-running workflows.

- Multimodal intelligence built in.

- Analyze PDFs, screenshots, code, UI captures, and research documents with vision-enabled models. Get clear explanations, summaries, and structured results instantly.

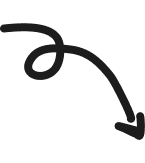

- Fine-grained control over every generation.

- Tune temperature, max tokens, top-p/top-k, penalties, and your system instructions with precision. Get exactly the style and behavior you want from any model.

- Extend with tools, skills, and custom knowledge.

- Use code execution, MCP tools, and attached documents to build richer agents. Automate tasks, generate documents, or extract data without leaving the app.

- Privacy you can trust.

- Chats are stored locally and never used for training, and API keys can be encrypted with your passphrase. BoltAI keeps your work private, secure, and fully under your control.

Work faster anywhere

Bring AI to whatever you’re doing with a single shortcut. BoltAI pops up instantly, ready to explain code, rewrite text, summarize a webpage, or help with whatever’s on your screen — no context switching.

Apple intelligence, done right.

- Global shortcut.

- Open BoltAI instantly over any app with a single keystroke — no context switching, no friction.

- Screenshot → Answer.

- Capture your screen and get explanations, fixes, or summaries in seconds.

- Dictation & inline editing.

- Speak your requests or edit text on the fly for effortless, hands-free productivity.

Everything in one AI workspace

On all your devices

Use BoltAI on your Mac, iPhone, and iPad with the same chats, agents, and projects synced securely through the cloud.

Local models, no network latency

Run powerful LLMs locally via Ollama and LM Studio for fast, private responses without per-token costs.
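
As a rough illustration of what talking to a local model involves, the sketch below builds a request for Ollama's local HTTP API, which listens on `http://localhost:11434` by default. It is not BoltAI's implementation. The helper names are hypothetical, and the model name in the usage comment is only an example that assumes the model has already been pulled.

```python
import json
import urllib.request

# Ollama's default local endpoint for one-shot text generation.
OLLAMA_URL = "http://localhost:11434/api/generate"


def build_generate_request(model: str, prompt: str,
                           url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build (but do not send) a non-streaming generate request."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model: str, prompt: str) -> str:
    """Send the request to a running Ollama server and return the reply text."""
    request = build_generate_request(model, prompt)
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read())["response"]


# Usage (requires a running Ollama server; "llama3" is an example model name):
# print(generate("llama3", "Summarize the benefits of local inference."))
```

Because the request never leaves the machine, there are no per-token charges and no prompt data sent to a third party. Response time still depends on the model size and the hardware.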

Privacy built in

Chats are stored locally, API keys can be encrypted with your passphrase, and nothing is ever used to train models.

Extend with tools & MCP

Connect BoltAI to local and remote MCP servers, toggle tools per agent, and keep your AI workflows configurable in code.

    {
      "mcpServers": {
        "filesystem": {
          "args": [
            "-y",
            "@modelcontextprotocol/server-filesystem",
            "~/Data"
          ],
          "command": "npx"
        }
      }
    }
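
To make the configuration above more concrete, here is a minimal sketch of how a client might turn each `mcpServers` entry into a process command line. How BoltAI actually launches servers is not stated here, so the function name and the `~` expansion step are assumptions for illustration only.

```python
import json
import os

# The same configuration shown above, embedded as a string for the example.
CONFIG = """
{
  "mcpServers": {
    "filesystem": {
      "args": ["-y", "@modelcontextprotocol/server-filesystem", "~/Data"],
      "command": "npx"
    }
  }
}
"""


def server_commands(config_text: str) -> dict[str, list[str]]:
    """Map each server name to the argv list a client could spawn."""
    config = json.loads(config_text)
    commands = {}
    for name, spec in config.get("mcpServers", {}).items():
        # Assumption: "~" is expanded by the client, since a process started
        # without a shell would otherwise receive the literal string "~/Data".
        args = [os.path.expanduser(arg) for arg in spec.get("args", [])]
        commands[name] = [spec["command"], *args]
    return commands


for name, argv in server_commands(CONFIG).items():
    print(name, argv)
```

Keeping the server list in a plain JSON file like this means it can be reviewed, versioned, and shared like any other configuration.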

“My company provides critical software services for customers such as Spotify, Google, Coinbase, Binance, and many others. We use AI in our workflows (custom developed as well as LLMs) but there was no tool before BoltAI that tightly integrated LLMs into my workflow. I always had to click out, leave my current task, go to ChatGPT or similar and come back which broke my flow. BoltAI makes everything effortless. It's a superpower.”

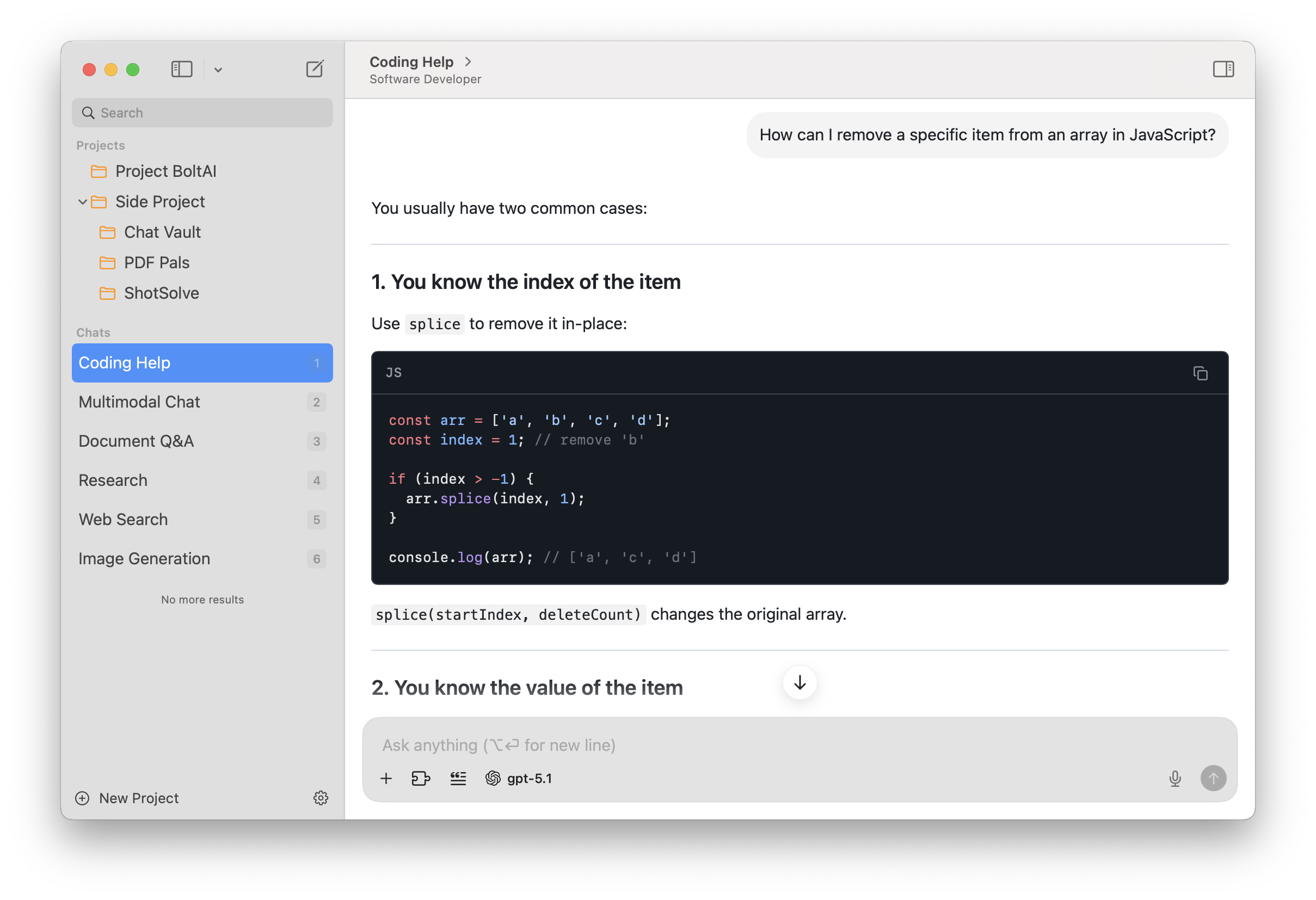

A powerful workspace for serious users

BoltAI brings together pro tools, advanced models, structured workflows, and deep customization — all in a clean, native Mac experience built for speed.

- Advanced agents

- Build reusable agents with custom instructions, knowledge, and tools for consistent high-quality outputs.

- Context profiles

- Switch between tailored configurations for coding, writing, research, or analysis instantly.

- Multimodal analysis

- Work with screenshots, PDFs, and documents. BoltAI extracts structure, summarizes, and explains.

- Multiple model providers

- Use OpenAI, Anthropic, Google, Mistral, Azure, Bedrock, and local models in one seamless workflow.

- Fork & branch chats

- Explore alternative ideas without losing your original thread. Perfect for comparison and iteration.

- Prompt library

- Save your best prompts once and reuse them anywhere. Build a personal toolkit for recurring tasks.

- Attachment-driven context

- Attach files, research, or reference documents. BoltAI manages context automatically and intelligently.

- Advanced LLM controls

- Fine-tune temperature, token limits, top-p/top-k, and penalties for precise, predictable results.
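
The controls named above roughly correspond to the sampling parameters that provider APIs accept. The sketch below shows one way such settings could be validated and turned into an OpenAI-style request fragment. The class, its defaults, and the allowed ranges are assumptions for illustration. Providers differ in which fields and ranges they support, and `top_k` in particular is not accepted by every provider.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class GenerationSettings:
    """Hypothetical container for per-request sampling controls."""
    temperature: float = 1.0
    max_tokens: int = 1024
    top_p: float = 1.0
    top_k: Optional[int] = None  # only sent when explicitly set
    frequency_penalty: float = 0.0
    presence_penalty: float = 0.0

    def to_payload(self) -> dict:
        """Validate the settings and return them as request fields."""
        # Assumed ranges; check the target provider's documentation.
        if not 0.0 <= self.temperature <= 2.0:
            raise ValueError("temperature must be between 0 and 2")
        if not 0.0 < self.top_p <= 1.0:
            raise ValueError("top_p must be in (0, 1]")
        if self.max_tokens < 1:
            raise ValueError("max_tokens must be at least 1")
        payload = {
            "temperature": self.temperature,
            "max_tokens": self.max_tokens,
            "top_p": self.top_p,
            "frequency_penalty": self.frequency_penalty,
            "presence_penalty": self.presence_penalty,
        }
        if self.top_k is not None:
            payload["top_k"] = self.top_k
        return payload


# A low temperature favors consistent, repeatable output.
precise = GenerationSettings(temperature=0.2, max_tokens=512)
print(precise.to_payload())
```

Lower temperature and top-p values narrow the range of tokens the model samples from, which is why they tend to produce more predictable results.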

Latest release

BoltAI 2.12.0

3 days ago

Build 67

This release is focused on appearance, readability, and UI customization. You now get more control over how BoltAI looks, how chat content is presented, and how common actions behave, along with a set of reliability improvements across providers and connectors.

New

- Added chat accent color controls so you can personalize conversation styling.

- Added a configurable font size for the left sidebar so you can make it easier to read.

- Added configurable message actions, including separate copy options for Markdown and rich text.

- Added styled workflow output formats for easier clipboard use.

- Added Project Instructions editing in its own dedicated window.

- Added a Continue generating flow for replies that stop because they hit a model output limit.

- Added Fastmail to the hosted connector catalog.

- Added separate Featurebase reader and writer connectors while keeping existing Featurebase connections working.

- Added support for manually entering Bedrock model IDs.

Improved

- The chat header is simpler and more stable, with agent selection moved into the composer.

- The prompt picker is easier to use, and creating a prompt now takes you directly to the Prompt Library.

- The "Show full message" preference is now better supported in chat, with improved message expansion behavior and spacing.

- BoltAI now reuses the selected Codex CLI model when generating chat titles.

- AI service setup does a better job explaining provider verification failures.

- More providers now support turning reasoning off directly from the compose controls.

- Custom OpenAI-compatible providers are more tolerant of non-standard provider responses.

- Connector startup and tool-call handling are more reliable, especially for local tools and mixed argument types.

Fixed

- Fixed regular text copy in chat so standard selection and copy work as expected again.

- Fixed Recently Deleted so project chats stay visible there, with clearer permanent deletion dates.

- Fixed long user messages not expanding correctly.

- Fixed onboarding flows where some users could not complete setup when using a custom provider.

- Fixed sending messages with a Bedrock profile, which could fail when no API key was stored locally.

- Fixed model catalog refreshes that could be triggered unnecessarily after renaming a service.

- Fixed hosted connector sign-in issues that affected Fastmail OAuth registration.

- Fixed edit-message window sizing so the editor fills resized windows correctly.

- Fixed spacing for the Show full message control.